Installation of LogZilla AI Copilot Module

Enable the LogZilla AI Copilot from System Settings, configuring model provider, model name, and API key for OpenAI, Anthropic, Gemini, or self-hosted endpoints.

The LogZilla Copilot module is included in the standard LogZilla installation package, so no separate module installation is needed. Setup consists of enabling and configuring the Copilot in System Settings.

Prerequisites

- LogZilla instance (minimum version v6.36)

- Access to a suitable LLM provider (OpenAI, Anthropic, Google Gemini, OpenRouter, or a self-hosted OpenAI-compatible endpoint such as vLLM or Ollama)

Enabling the AI Copilot

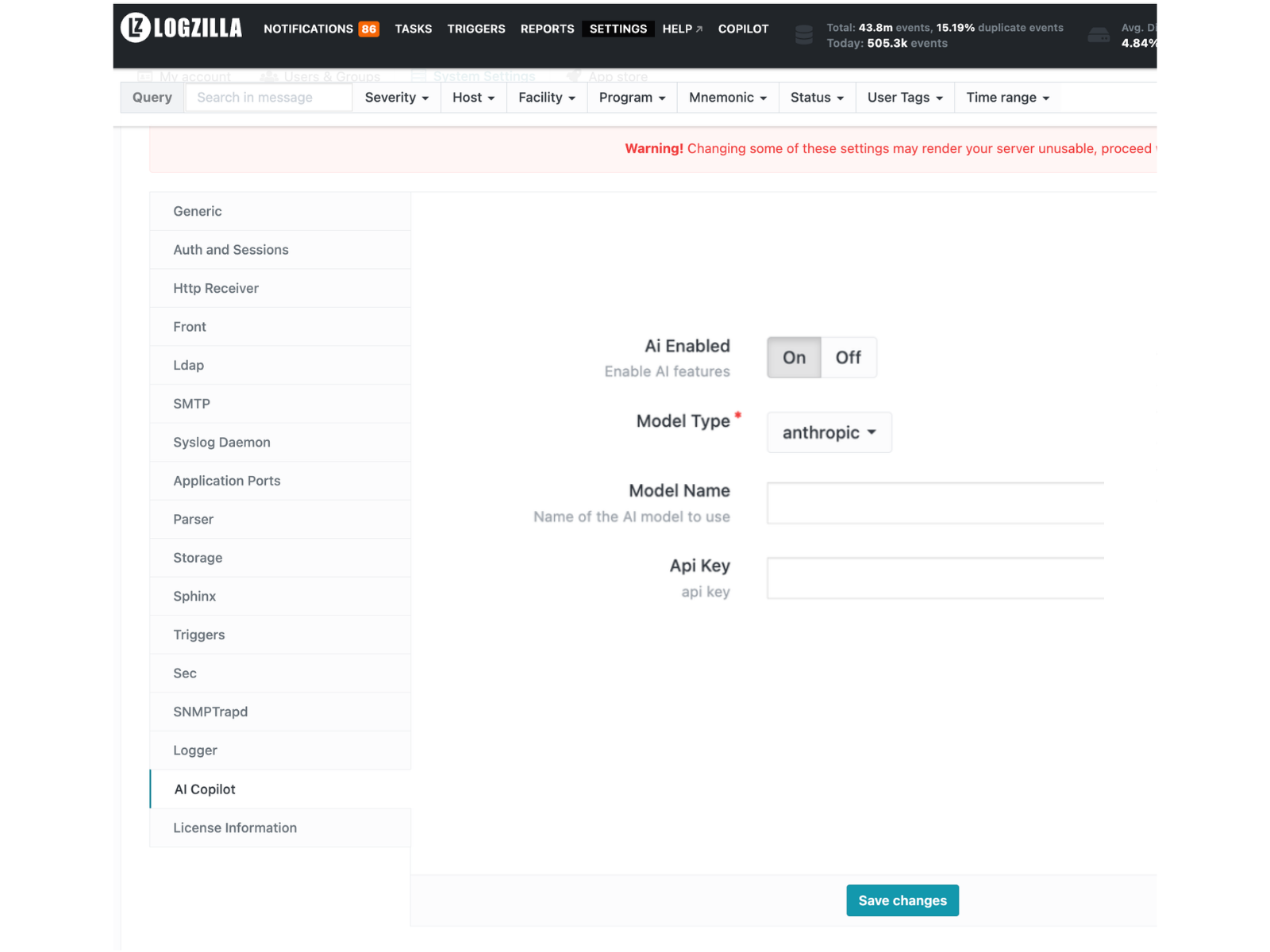

1. Open the AI Copilot settings

From the top navigation, open Settings and select System Settings.

In the sidebar, click AI Copilot.

2. Configure the required fields

Four fields are required to enable the Copilot: Ai Enabled, Model Type, Model Name, and Api Key. The remaining fields are optional and primarily used for tuning or self-hosted deployments.

Required fields:

- Ai Enabled: Set to `On` to activate the AI Copilot feature.

- Model Type: Select the AI provider. Supported values are `openai`, `anthropic`, `gemini`, `vllm/ollama`, and `openrouter`. The `vllm/ollama` option covers any OpenAI-compatible endpoint, including vLLM and Ollama deployments.

- Model Name: The specific model to use. Examples: `gpt-5.2`, `claude-opus-4-6`, `claude-sonnet-4-6`, `llama3`.

- Api Key: The API key for the selected provider.

- Query Loglevel: Controls logging verbosity for AI query operations. Defaults to `DISABLED`; set to `INFO` or `DEBUG` when investigating an issue.
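The required fields can be sanity-checked before they are entered in the UI. The following Python sketch is illustrative only: the dictionary keys (`ai_enabled`, `model_type`, `model_name`, `api_key`) and the `check_required` helper are hypothetical names chosen for this example, not LogZilla's internal setting keys. Only the list of supported provider values is taken from this section.

```python
# Supported Model Type values, as listed in this section.
SUPPORTED_MODEL_TYPES = {"openai", "anthropic", "gemini", "vllm/ollama", "openrouter"}


def check_required(settings: dict) -> list[str]:
    """Return a list of problems found in the required Copilot fields.

    The keys read from `settings` are hypothetical names used only in this sketch.
    """
    problems = []
    # Ai Enabled must be switched on for the Copilot to activate.
    if not settings.get("ai_enabled"):
        problems.append("Ai Enabled must be On")
    # Model Type must be one of the documented provider values.
    if settings.get("model_type") not in SUPPORTED_MODEL_TYPES:
        problems.append(
            "Model Type must be one of: " + ", ".join(sorted(SUPPORTED_MODEL_TYPES))
        )
    # Model Name and Api Key must be present and non-blank.
    for key, label in (("model_name", "Model Name"), ("api_key", "Api Key")):
        if not str(settings.get(key) or "").strip():
            problems.append(f"{label} must not be empty")
    return problems
```

An empty list means all four required fields are filled in with a supported provider; the sketch does not verify that the model name or API key is actually accepted by the provider.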

Optional fields:

- Api Base Url: Base URL for the AI API provider. Required for self-hosted deployments such as vLLM or Ollama (for example, `http://host:11434/v1` for an Ollama server).

- Max Tokens: Maximum number of tokens the model produces per generation. (default: 8192, minimum: 8192)

- Context Window: Maximum number of context tokens the model accepts. Some models require this parameter; for others it is ignored. (default: unset, minimum when set: 100000)

- Request Timeout: Maximum number of seconds to wait for the LLM to respond before the request is considered timed out. (default: 300, range: 30-3600)
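The documented limits for the optional fields can be expressed as a small check. This is a minimal sketch, not LogZilla's own validation: the `check_optional` function and its parameter names are hypothetical, and it assumes that `vllm/ollama` is the only Model Type that needs an Api Base Url. The defaults and bounds are the ones listed above.

```python
from typing import Optional

MAX_TOKENS_MIN = 8192          # documented default and minimum
CONTEXT_WINDOW_MIN = 100_000   # documented minimum when the field is set
TIMEOUT_MIN, TIMEOUT_MAX = 30, 3600  # documented range, in seconds


def check_optional(
    model_type: str,
    api_base_url: Optional[str] = None,
    max_tokens: int = 8192,
    context_window: Optional[int] = None,  # unset by default
    request_timeout: int = 300,
) -> list[str]:
    """Return a list of problems found in the optional Copilot fields."""
    problems = []
    # Assumption: only the self-hosted option requires a base URL.
    if model_type == "vllm/ollama" and not api_base_url:
        problems.append("Api Base Url is required for self-hosted deployments")
    if max_tokens < MAX_TOKENS_MIN:
        problems.append(f"Max Tokens must be at least {MAX_TOKENS_MIN}")
    # Context Window is only checked when a value has been provided.
    if context_window is not None and context_window < CONTEXT_WINDOW_MIN:
        problems.append(f"Context Window must be at least {CONTEXT_WINDOW_MIN} when set")
    if not TIMEOUT_MIN <= request_timeout <= TIMEOUT_MAX:
        problems.append(
            f"Request Timeout must be between {TIMEOUT_MIN} and {TIMEOUT_MAX} seconds"
        )
    return problems
```

For example, `check_optional("vllm/ollama", api_base_url="http://host:11434/v1")` returns an empty list, while omitting the base URL for the same Model Type reports one problem.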

3. Save Settings

Click Save changes to apply. The LogZilla UI restarts automatically.

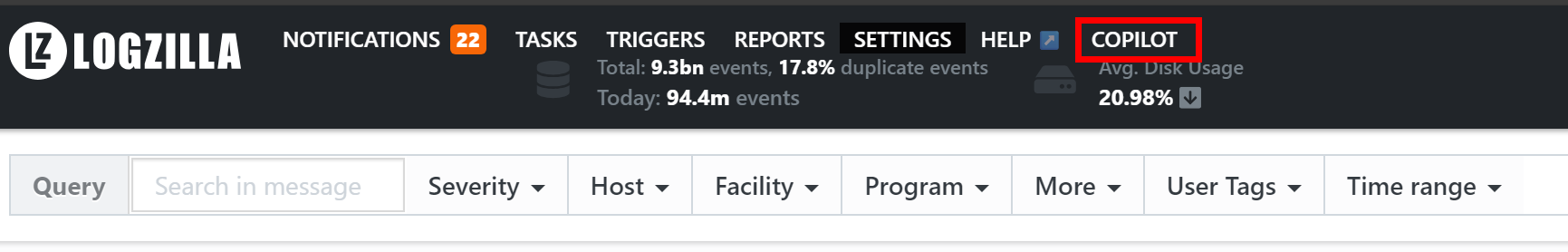

4. Access the AI Copilot

After a few seconds, the Copilot link appears in the top navigation. Clicking it opens the AI Copilot interface in a new browser tab at the `/ui2/chat-ai` path of the LogZilla URL.
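To bookmark or script a link to the Copilot, the address can be derived from the LogZilla base URL. The sketch below uses Python's standard `urllib.parse`; the `copilot_url` helper and the `logzilla.example.com` host are hypothetical, and the code assumes LogZilla is served from the root of its host, so any existing path in the input is replaced.

```python
from urllib.parse import urlsplit, urlunsplit

COPILOT_PATH = "/ui2/chat-ai"  # path documented in this section


def copilot_url(logzilla_url: str) -> str:
    """Build the AI Copilot address from a LogZilla base URL.

    Keeps the scheme and host (including any port) and replaces the
    path, query, and fragment with the Copilot path.
    """
    parts = urlsplit(logzilla_url)
    return urlunsplit((parts.scheme, parts.netloc, COPILOT_PATH, "", ""))


print(copilot_url("https://logzilla.example.com/"))
# prints "https://logzilla.example.com/ui2/chat-ai"
```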

Advanced settings

The AI Copilot pane also exposes several advanced fields. These are hidden by default and surface when the "Show advanced settings" toggle is enabled on the Settings page. Most deployments do not need to change them.

- Logzilla Mcp Docs Url: Base URL for the LogZilla MCP documentation server. Defaults to `https://mcp-docs.logzilla.ai/mcp`.

- Extra Mcp Urls: Additional MCP servers to make available to the Copilot. Defaults to empty.

- Tracing Enabled: When on, AI request traces are exported to the configured tracing server. Defaults to off.

- Tracing Project Name: Project label applied to exported traces. Defaults to `lz-ai-tracking`.

- Tracing Server Url: Endpoint of the external tracing collector.

- Tracing Api Key: API key used when the tracing server requires authentication.

For additional information, consult the LogZilla documentation or contact LogZilla support.